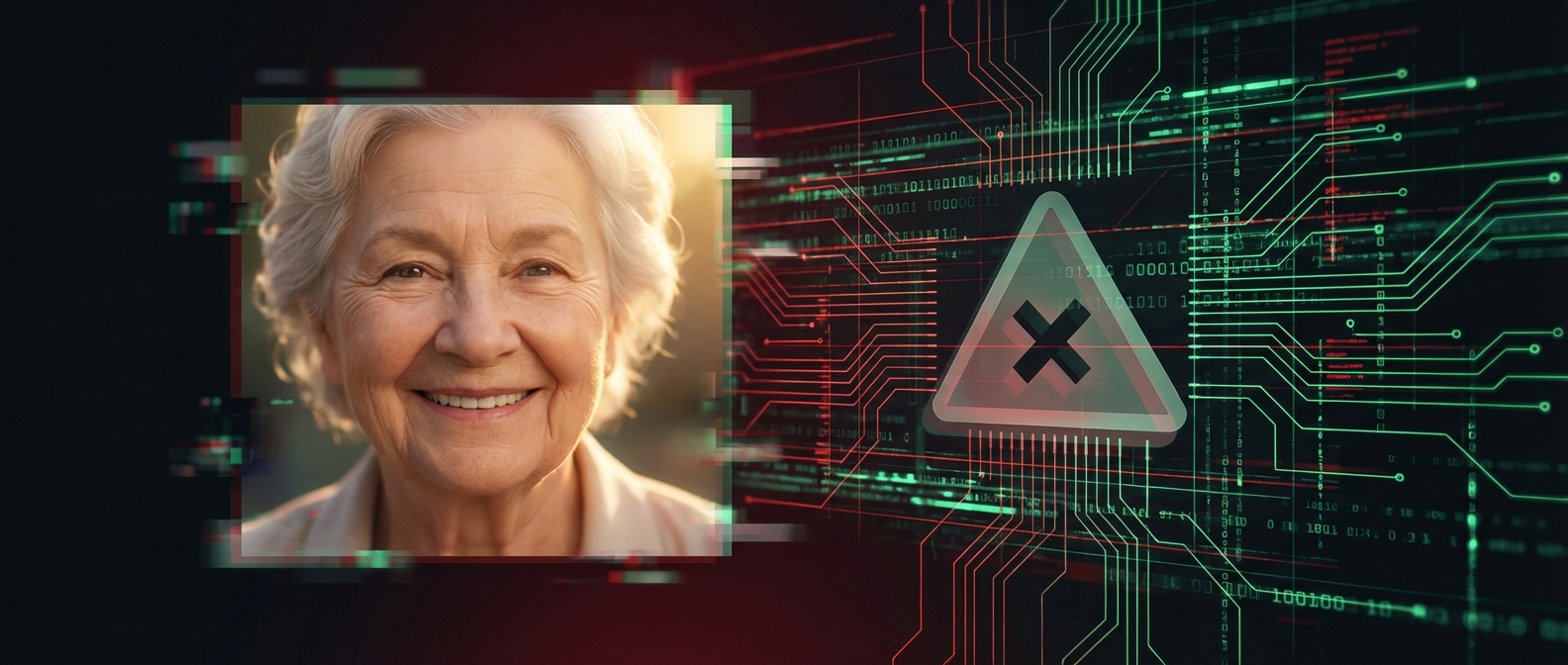

The AI Influencer Scam Targeting Your Parents: How Fake Characters Are Exploiting Older Internet Users

A thread went viral on X this week laying out, in clinical detail, how to make $20,000 to $50,000 per month by creating fake AI-generated personas and using them to sell products to older internet users who cannot tell the difference. It reads like a business plan. It has revenue numbers, demographic breakdowns, conversion rates, and case studies. And the author is not embarrassed about any of it.

The playbook is now public. If you have parents, grandparents, or anyone over 35 who uses Facebook, Instagram, or YouTube, this article is about them.

The Two-Internet Theory

The core argument of the thread is that there are two separate internets operating simultaneously:

Internet 1 is where you are reading this. People aged 18-30 on X, TikTok, Reddit. They are extremely online, aware of AI, and can spot synthetic content from the lip sync alone. They are also, as the author bluntly puts it, "the brokest demographic in human history." Negative net worth, maxed credit cards, returning half of what they buy.

Internet 2 is everyone else. People aged 35-65 in Facebook groups like "Natural Remedies for Joint Pain," watching YouTube tutorials about back pain and Instagram reels recommended by friends. By the thread's account, this group controls 85% of household spending in the US, has the highest disposable income of any living generation, buys aggressively, and returns almost nothing. And they have, according to a Deloitte survey of 4,000 consumers across 6 countries, essentially zero ability to detect AI-generated content.

The author frames this as a business opportunity. We should frame it as what it actually is: a trust exploitation playbook targeting the most vulnerable internet users.

The Playbook

The operation works like this:

- Create a fake persona. An AI-generated "grandmother" who gives health tips. A "monk" sharing wellness advice. A "healer" recommending natural remedies. A "biblical wellness elder." The character is entirely synthetic. The face, the voice, the mannerisms are all generated by AI tools that cost roughly $60/month.

- Post daily content. Two educational videos for every one product video. The educational content is genuinely helpful (stretching routines, herbal remedies, sleep tips). It builds trust. The product videos then leverage that trust to recommend specific items.

- Monetize through affiliate links. The products are real items sold on Amazon or TikTok Shop. The operator earns a 15-40% commission on every sale made through the fake persona. No inventory, no shipping, no customer service. Just the affiliate cut.

- Automate engagement. Comments like "WELLNESS" trigger automated DM sequences that send personalized messages with product links. The thread puts click-through rates on these personalized messages at 31-41%, compared to 3-5% for generic messages.

- Scale with multiple characters. Once one character is profitable, create more. Different niches, different demographics, different platforms. One operator can run a fleet of fake personas simultaneously.

The author claims one Instagram account with 660,000 followers features a fully AI-generated grandmother character. The audience is women aged 40-60 who believe she is a real person. One operation reportedly hit $641K in gross merchandise value in a single month.
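To put the figures quoted above in perspective, here is a rough back-of-envelope calculation. It assumes, purely for illustration, that the 15-40% commission range applied to the entire $641K of gross merchandise value; the thread does not say what rate that operation actually earned, and the function name is ours.

```python
def commission_range(gmv, low_pct=15, high_pct=40):
    """Return (low, high) affiliate earnings for a gross merchandise value.

    Assumption: a single commission rate applies to every sale, which is a
    simplification of how affiliate programs pay out.
    """
    return gmv * low_pct / 100, gmv * high_pct / 100


low, high = commission_range(641_000)
print(f"Estimated affiliate cut: ${low:,.0f} to ${high:,.0f}")
# Estimated affiliate cut: $96,150 to $256,400
```

Under that assumption, a single month at that volume would yield somewhere between about $96,000 and $256,000 in commissions, before tool costs or returned orders.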

Why It Works (For Now)

The thread is correct about one thing: the detection gap is real. People aged 35-65 did not grow up with internet culture. They evaluate content the way they always have: "Is this helpful? Does this person seem trustworthy? Do I want what they are recommending?" They have no mental framework for questioning whether the person on screen is real.

This is not stupidity. It is a reasonable trust heuristic that has worked for decades. When you see a friendly grandmother sharing a recipe for turmeric tea on Facebook, why would you assume she was generated by a 23-year-old running GPT prompts in a Discord server? You would not, because until very recently, that scenario was not possible.

The technology caught up to the trust. The social norms did not.

The Ethics Section That Was Not There

The original thread has a section called "the elephant in the room" that attempts to address ethics. The argument is: TV commercials have used actors playing trustworthy characters for decades. This is the same thing, just cheaper.

That comparison falls apart immediately. TV commercials are clearly labeled as advertisements. Everyone watching knows the actor is playing a role. The entire context screams "this is a commercial." That shared understanding is what makes advertising ethical (or at least legal).

What is being described here is fundamentally different. The audience does not know the persona is fake. The trust is built on deception, not disclosure. The grandmother is not an actress playing a role. She is a fiction designed to exploit the audience's inability to detect AI content. The value proposition is literally "they cannot tell, so we can profit."

The thread even acknowledges that this is time-limited: "The window is closing." It expects media coverage to increase, platforms to add disclosure labels, and younger relatives to warn their parents. The operators know the exploit has an expiration date and are racing to extract maximum revenue before it closes.

When your business model requires the customer to be deceived, and you are racing against the clock before they figure it out, you are running a scam. The fact that the products are real and the health tips are decent does not change the fundamental deception.

The Demographics Being Targeted

The thread identifies specific groups with disturbing precision:

- Women aged 35-55 who control 85% of household purchasing decisions and "buy impulsively when a product solves a real daily problem"

- Menopausal women described as a "$600B+ market" who spend "$2K-$4K per year on symptom management"

- Night shift workers who "spend 23% more on health products" and are "active during late night hours"

- Church women aged 40-65 who are "loyal buyers" and "trust recommendations from church groups"

- New mothers described as "millions awake at night buying products aggressively"

Read that list again. These are not "demographics." These are people at vulnerable moments. Menopausal women dealing with real health issues. New mothers exhausted and anxious. Night workers isolated and searching for solutions. Church communities built on trust being weaponized for affiliate commissions.

The precision of the targeting is the quiet part said out loud.

What You Can Do

If you have family members in these demographics:

- Talk to them about AI-generated content. Show them examples. Explain that the person in a video might not be real. This is an uncomfortable conversation, but it is necessary.

- Teach the "comment to DM" red flag. Any account that asks you to comment a keyword to receive a message is running an automated sales funnel. That is not a person responding to you.

- Check health advice sources. If a Facebook "grandmother" is recommending supplements, look up whether that person exists outside of that platform. Check for a real website, a real name, a real practice.

- Report fake accounts. Instagram, TikTok, Facebook, and YouTube all have reporting mechanisms for impersonation and misleading content. Use them.
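The "comment to DM" red flag from the list above is mechanical enough to sketch in code. What follows is a minimal heuristic, not a real detector: the regular expression and the function name `looks_like_comment_funnel` are our own assumptions, and it only catches captions that ask viewers to comment, type, or reply with an all-caps keyword.

```python
import re
from typing import Optional

# Hypothetical pattern: a verb such as "comment" (any case), optionally
# "the word", then an ALL-CAPS keyword of three or more letters.
FUNNEL_PATTERN = re.compile(
    r"\b(?i:comment|type|reply)\b(?:\s+the\s+word)?\s+[\"']?([A-Z]{3,})\b"
)


def looks_like_comment_funnel(caption: str) -> Optional[str]:
    """Return the trigger keyword if the caption looks like a DM funnel."""
    match = FUNNEL_PATTERN.search(caption)
    return match.group(1) if match else None


print(looks_like_comment_funnel('Comment "WELLNESS" and I will send you the link'))
# WELLNESS
print(looks_like_comment_funnel("Leave a comment if this stretch helped you"))
# None
```

A pattern this simple will miss many phrasings and will flag some innocent ones, so treat a match as a prompt to look closer at the account rather than as proof.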

The Bigger Problem

The thread is a symptom, not the disease. AI-generated personas are going to get better, cheaper, and harder to detect. The grandmother that looks slightly off today could be indistinguishable from a real person in six months.

Platforms need to solve this at the infrastructure level. Content provenance (proving where a video came from), mandatory AI disclosure labels, and authentication systems for real creators are all technically possible. Most platforms are moving slowly because engagement from AI content is still engagement, and engagement drives ad revenue.
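To make "content provenance" concrete, here is a deliberately simplified sketch of the idea: the creator publishes a tag computed over a hash of the video bytes, and a verifier holding the key can later check that the file is unmodified and came from that creator. It is an illustration only. Real provenance schemes such as C2PA rely on public-key signatures and signed manifests rather than the shared secret used here, and the key, function names, and sample bytes are all hypothetical.

```python
import hashlib
import hmac


def sign_content(video_bytes: bytes, creator_key: bytes) -> str:
    """Return a hex tag binding the content hash to the creator's key."""
    digest = hashlib.sha256(video_bytes).digest()
    return hmac.new(creator_key, digest, hashlib.sha256).hexdigest()


def verify_content(video_bytes: bytes, creator_key: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_content(video_bytes, creator_key)
    return hmac.compare_digest(expected, tag)


key = b"hypothetical-creator-key"   # stand-in for a real creator credential
original = b"original video bytes"  # stand-in for an actual video file
tag = sign_content(original, key)

print(verify_content(original, key, tag))           # True
print(verify_content(b"tampered bytes", key, tag))  # False
```

The point of the sketch is that verification is cheap once a tag travels with the content. The hard parts are deciding who issues the keys and getting platforms to check and display the result.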

Until platforms act, the burden falls on the people who understand the technology to protect the people who do not. That is an unfair distribution of responsibility, but it is the one we have.

The playbook is public now. The operators are already running. The question is whether we let this become the norm or whether we treat it as what it is: industrialized deception targeting people who trust what they see on the internet because nobody told them not to.
